E-commerce sales are expected to hit new highs during Black Friday and Cyber Monday this year. As a result, online retailers face two challenges: standing out in a flurry of compelling promotions, and keeping up with a surge of customer inquiries and engagement. One way retailers are addressing these challenges is through trustworthy and secure integrations of Large Language Model (LLM) solutions.

Crunching the numbers ahead of the holidays

When it comes to promotional planning for Black Friday and Cyber Monday (BFCM), it is imperative that companies differentiate their offering from an increasing number of competitors and dynamically adjust offers based on actual demand. This differentiation can be accomplished by implementing an LLM-based solution that reasons over previous sales data, forecasted sales, and offer semantics. Prediction Guard (a member of the Intel® Liftoff for startups program) created such a solution for one of their e-commerce customers. The general approach is highlighted below.

Analyzing data with LLMs

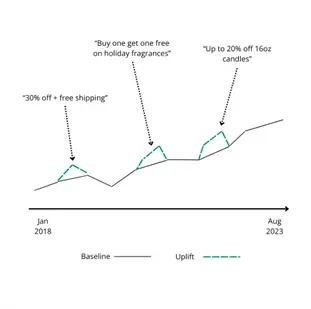

In the example solution, the first step is to analyze historical sales data (e.g., from Shopify) alongside previous offers (e.g., from Mailchimp) to establish historical baseline sales numbers and sales “uplifts” tied to previous offers. The historical offers are extracted from previous marketing campaigns in a secure environment on Intel Developer Cloud (IDC) using a chain of calls to the latest LLMs like Llama 2. These are matched to the historical sales data to determine a sales uplift associated with each promotion (Refer to Figure 1).

Figure 1
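The baseline and uplift calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not Prediction Guard's implementation: the function name `compute_uplifts`, the data layout, and the choice of "average daily revenue on non-promotional days" as the baseline are all assumptions made for the example.

```python
from datetime import date
from statistics import mean


def compute_uplifts(daily_sales, offers):
    """Return {offer_name: fractional uplift over the non-promotional baseline}.

    daily_sales: dict mapping date -> revenue for that day
    offers: list of (name, start_date, end_date) tuples, end date inclusive
    (Both structures are hypothetical; real data would come from exports
    of the sales and email platforms.)
    """
    def in_window(day, start, end):
        return start <= day <= end

    # Days covered by any offer are excluded from the baseline.
    promo_days = {
        day
        for day in daily_sales
        for _, start, end in offers
        if in_window(day, start, end)
    }
    baseline = mean(rev for day, rev in daily_sales.items() if day not in promo_days)

    uplifts = {}
    for name, start, end in offers:
        promo_revenue = [
            rev for day, rev in daily_sales.items() if in_window(day, start, end)
        ]
        uplifts[name] = (mean(promo_revenue) - baseline) / baseline
    return uplifts


# Toy data: ten days at 1000 in revenue, with a two-day promotion.
sales = {date(2023, 11, d): 1000.0 for d in range(1, 11)}
sales[date(2023, 11, 4)] = 1300.0
sales[date(2023, 11, 5)] = 1200.0
offers = [("20% off sitewide", date(2023, 11, 4), date(2023, 11, 5))]

uplifts = compute_uplifts(sales, offers)
print(uplifts)
```

A production version would likely need a more careful baseline (for example, one that accounts for seasonality or day-of-week effects), but the structure of "match each offer window to sales, then compare against baseline" stays the same.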

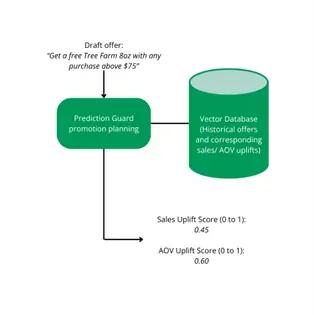

This uplift data is combined with the historical offers and delivery windows of these offers. The historical offers are stored in a database, enabling new draft offers to be semantically matched to the historical offers. The setup allows marketers to draft new offers for BFCM, match them to the closest historical offers during similar delivery times, and calculate a forecasted uplift corresponding to the draft offers (Refer to Figure 2). The forecasts can then be used to evaluate draft offers and optimize messaging.

Figure 2
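To make the matching step concrete, here is a minimal sketch of how a draft offer might be matched to historical offers and turned into a forecast. It rests on several assumptions: a simple bag-of-words cosine similarity stands in for real semantic embeddings, the `window` field is a hypothetical label for the delivery period, and the forecast is a similarity-weighted average of the closest matches. None of these details come from the solution described in this article.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    # Stand-in for an embedding model: lowercase token counts.
    return Counter(re.findall(r"[a-z0-9%]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def forecast_uplift(draft, window, history, k=2):
    """Similarity-weighted average uplift of the k closest historical offers
    sent in the same delivery window. Returns None if nothing matches."""
    draft_vec = bag_of_words(draft)
    candidates = [
        (cosine(draft_vec, bag_of_words(h["text"])), h["uplift"])
        for h in history
        if h["window"] == window
    ]
    top = sorted(candidates, reverse=True)[:k]
    total = sum(sim for sim, _ in top)
    if total == 0:
        return None
    return sum(sim * uplift for sim, uplift in top) / total


# Hypothetical historical offers with previously measured uplifts.
history = [
    {"text": "20% off everything this weekend", "window": "november", "uplift": 0.30},
    {"text": "Free shipping on all orders", "window": "november", "uplift": 0.10},
    {"text": "20% off everything this weekend", "window": "july", "uplift": 0.05},
]

forecast = forecast_uplift("20% off everything this weekend", "november", history)
no_match = forecast_uplift("20% off everything this weekend", "march", history)
print(forecast, no_match)
```

In practice, the bag-of-words vectors would be replaced by embeddings stored in a vector database, so that offers with similar meaning but different wording still match.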

Generating optimized offers with LLMs

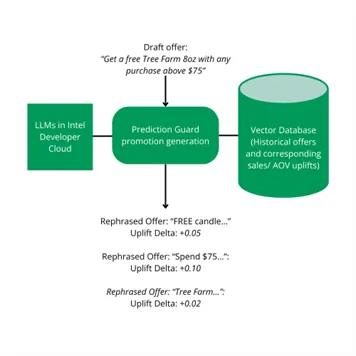

Finally, to enable marketers to be responsive and dynamic, privately hosted LLMs (in Intel Developer Cloud on Gaudi2) proactively rephrase or rewrite draft offers in ways that increase forecasted uplifts. A company can put a draft offer into this promotion planning tool and request that the tool generate similar offers with the same basic parameters (e.g., a certain percentage off) but with wording that will outperform the draft wording. LLMs are great at this task and are able to act in an agentic way, reasoning over the historical sales uplift data and iteratively rephrasing the offers to find better wording (refer to Figure 3).

Figure 3
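The iterative rephrasing loop can be thought of as a simple search: propose reworded offers, score each with the uplift forecast, and keep the best one. The sketch below assumes this hill-climbing structure for illustration only. `toy_rephrase` and `toy_score` are hypothetical stand-ins for a call to a privately hosted LLM and for the uplift forecast, respectively.

```python
def optimize_offer(draft, rephrase, score, rounds=3):
    """Keep rewording the best offer so far while the forecasted uplift improves.

    rephrase: callable(text) -> list of candidate rewordings (an LLM in practice)
    score: callable(text) -> forecasted uplift for that wording
    """
    best_text, best_score = draft, score(draft)
    for _ in range(rounds):
        improved = False
        for candidate in rephrase(best_text):
            candidate_score = score(candidate)
            if candidate_score > best_score:
                best_text, best_score = candidate, candidate_score
                improved = True
        if not improved:
            break  # no candidate beat the current best wording
    return best_text, best_score


def toy_rephrase(text):
    # Hypothetical stand-in for an LLM call that proposes rewordings
    # while leaving the offer's basic parameters (the discount) untouched.
    variants = []
    if "today only" not in text.lower():
        variants.append(text + " Today only!")
    if not text.startswith("Don't miss out:"):
        variants.append("Don't miss out: " + text)
    return variants


def toy_score(text):
    # Hypothetical stand-in for the uplift forecast of a given wording.
    value = 0.10
    if "today only" in text.lower():
        value += 0.05
    if text.startswith("Don't miss out:"):
        value += 0.02
    return value


draft = "20% off all orders."
best_text, best_score = optimize_offer(draft, toy_rephrase, toy_score)
print(best_text, round(best_score, 2))
```

Passing the rephrasing and scoring steps in as callables keeps the loop independent of any particular model or forecasting method.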

Given that this promotion planning functionality processes email campaign data and generates offers that need to be private until they are announced, businesses would be hesitant to upload related data into an LLM API using closed, proprietary models with sometimes confusing terms of use. Running these text generations in Intel Developer Cloud with a trusted partner like Prediction Guard allows them to keep their data private without sacrificing magical (and game-changing) AI functionality.

Not only that, but this functionality produces results! In some cases, this LLM-driven promotional planning strategy was deployed in flash sales leading up to BFCM, resulting in a record 40% boost in revenue!

Reliably mitigating increased demand for customer experience interactions

As promotions tend to attract a wave of customers, retailers are forced to deal with increased demand for customer experience (CX) and support interactions. Intriguing and attracting customers during BFCM means responding well to a flood of requests for exchanges, returns, shipping updates, and more.

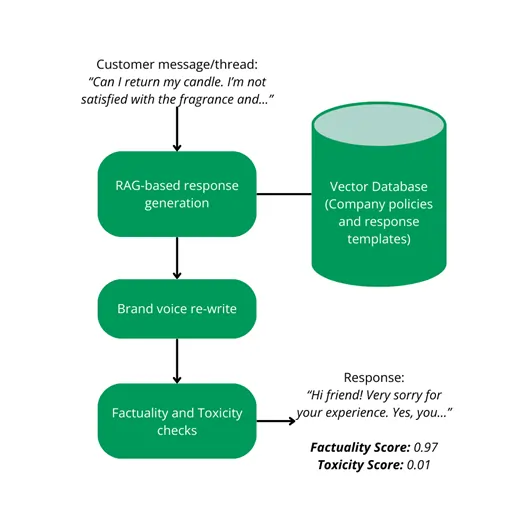

In addition to the promotional planning tools, Prediction Guard has pioneered an LLM-powered customer service system for e-commerce customers leading up to the holiday rush. Using the latest LLMs (again, hosted in IDC), this system can respond to customer email queries accurately in the brand’s unique voice, while seamlessly analyzing the context from prior conversational exchanges. These conversational exchanges are kept private, and LLM responses are validated and de-risked using Prediction Guard’s unique factuality and toxicity checks (refer to Figure 4).

Figure 4
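One way to picture the validation step is as a gate that a drafted reply must pass before it is sent. The sketch below is an assumption-laden illustration, not Prediction Guard's API: `validate_reply`, the threshold values, and the toy word-overlap and blocklist checks are all hypothetical placeholders for real factuality and toxicity models.

```python
import re


def validate_reply(reply, context, factuality_check, toxicity_check,
                   min_factuality=0.7, max_toxicity=0.2):
    """Approve a drafted reply only if it passes both checks.

    The thresholds are illustrative assumptions, not recommended values.
    """
    factuality = factuality_check(reference=context, text=reply)
    toxicity = toxicity_check(text=reply)
    approved = factuality >= min_factuality and toxicity <= max_toxicity
    return {"approved": approved, "factuality": factuality, "toxicity": toxicity}


def tokens(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def toy_factuality(reference, text):
    # Hypothetical stand-in: share of reply words that appear in the context.
    words = tokens(text)
    if not words:
        return 0.0
    known = set(tokens(reference))
    return sum(w in known for w in words) / len(words)


def toy_toxicity(text):
    # Hypothetical stand-in: flags replies containing blocklisted words.
    blocklist = {"stupid", "idiot"}
    return 1.0 if blocklist & set(tokens(text)) else 0.0


context = "Order 1234 shipped on Monday and arrives Friday."
grounded = validate_reply("Order 1234 shipped on Monday",
                          context, toy_factuality, toy_toxicity)
ungrounded = validate_reply("Your refund was issued yesterday",
                            context, toy_factuality, toy_toxicity)
print(grounded["approved"], ungrounded["approved"])
```

Replies that fail the gate could be regenerated or routed to a human agent rather than sent automatically.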

Customers implementing this type of LLM-based customer response system are seeing huge boosts in operational efficiency. In certain cases, customers are seeing a 150% increase in the number of responses that they are able to handle. This will be mission-critical during BFCM.

As e-commerce continues to define the holiday shopping experience, strategic implementations of AI solutions will be key to converting website traffic into satisfied customers. However, these AI solutions need to be implemented in a trustworthy manner, especially in cases where customer data or sensitive financial information is being processed. Prediction Guard’s private, secure, and de-risked LLM solutions deployed on Intel Developer Cloud are meeting this need and demonstrating value this holiday season.